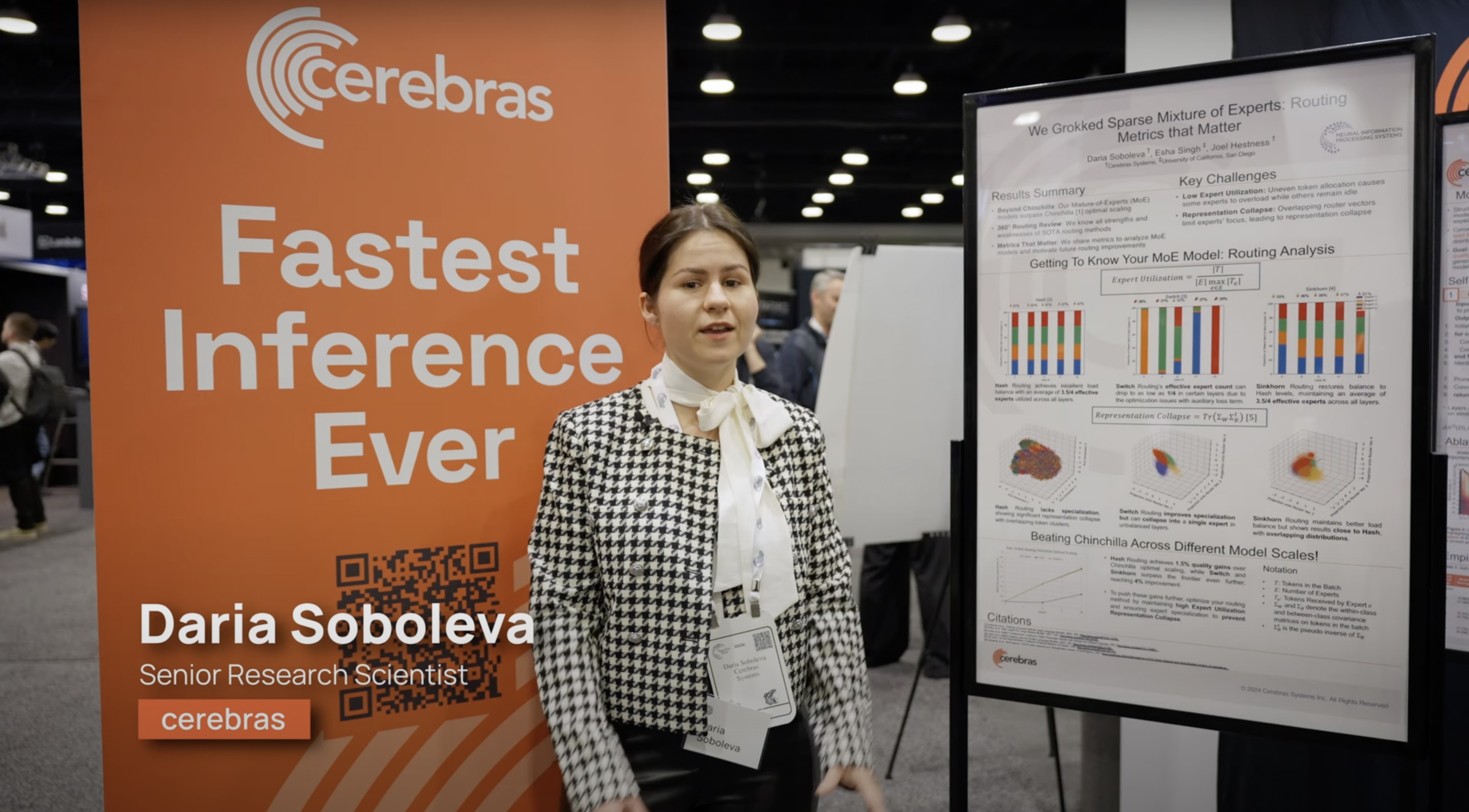

Daria Soboleva

Head Research Scientist at Cerebras

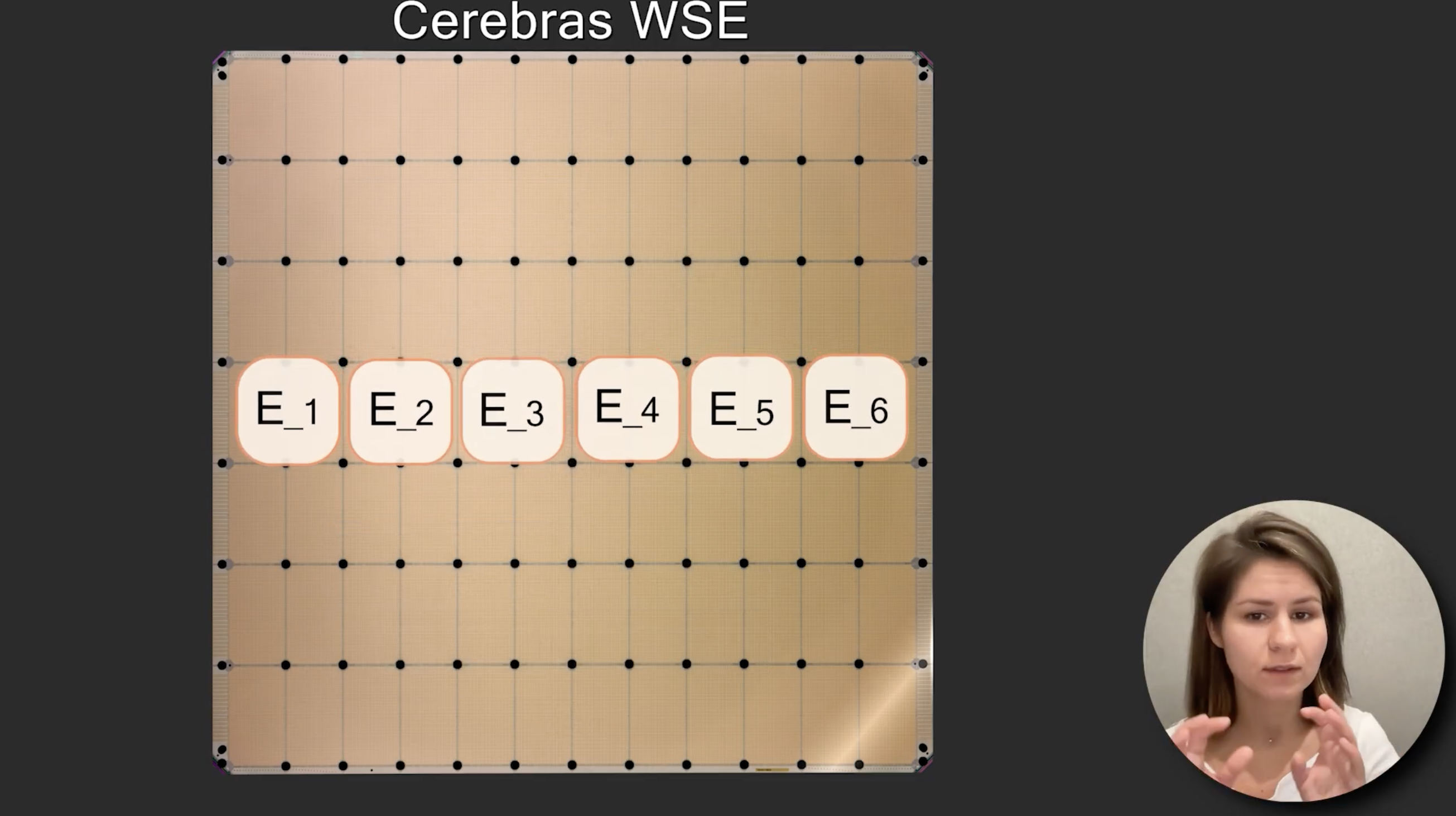

I'm a Head Research Scientist at Cerebras with nearly a decade of AI/ML experience. I designed Mixture-of-Experts (MoE) recipes from the ground up for Cerebras hardware and now lead training at unprecedented scale across hardware, software, and ML teams. From this hands-on work, I distill practical MoE recipes into The MoE 101 Guide.

My focus is building efficient, scalable AI systems end to end, spanning data, models, and infrastructure. This includes SlimPajama, a 627B-token dataset with over 1M downloads, and BTLM, a 3B model achieving 7B-class quality while using 3× less inference compute.

Previously, I built ML systems used by millions. At Yandex, I invented the YATI model that powers Yandex Search. At Google, I focused on improving Google Assistant's ASR and built models that were deployed in Google Captions and Google Gboard.

The MoE 101 Guide

The full course is available at: https://www.cerebras.ai/moe-guide

I regularly advise top AI labs on MoE architecture and training dynamics. For consulting inquiries or early-stage collaborations, please reach out via email: dariamsoboleva@gmail.com.

Professional services

Speaker, organizer, and moderator for both panels.

Featured talks

Recent publications

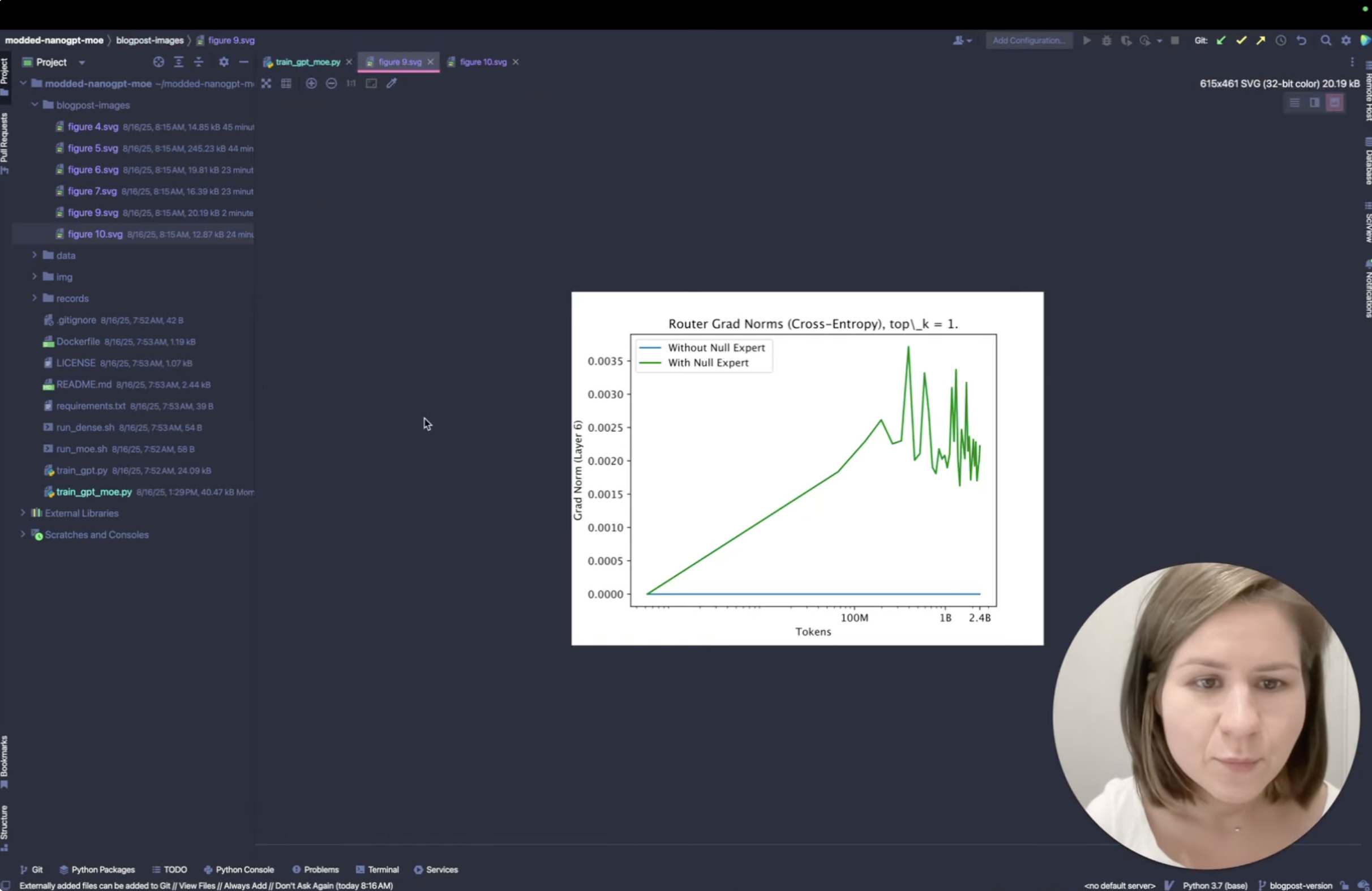

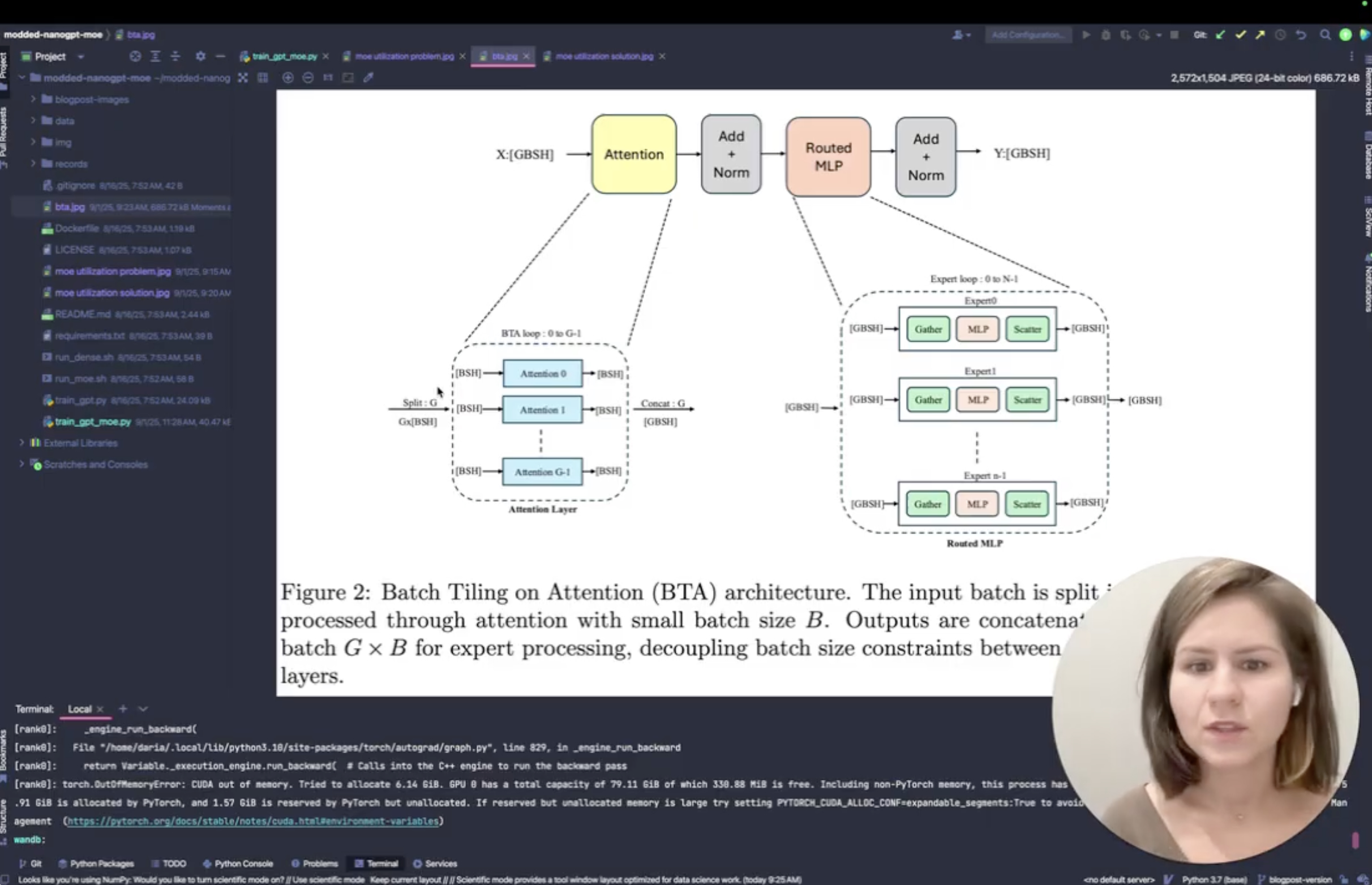

- Daria Soboleva, Etienne Goffinet, Hui Zeng, Sangamesh Ragate, Elif Albuz, Natalia Vassilieva, Batch Tiling on Attention: Efficient Mixture of Experts Training on Wafer-Scale Processors, SC, 2025 [paper]

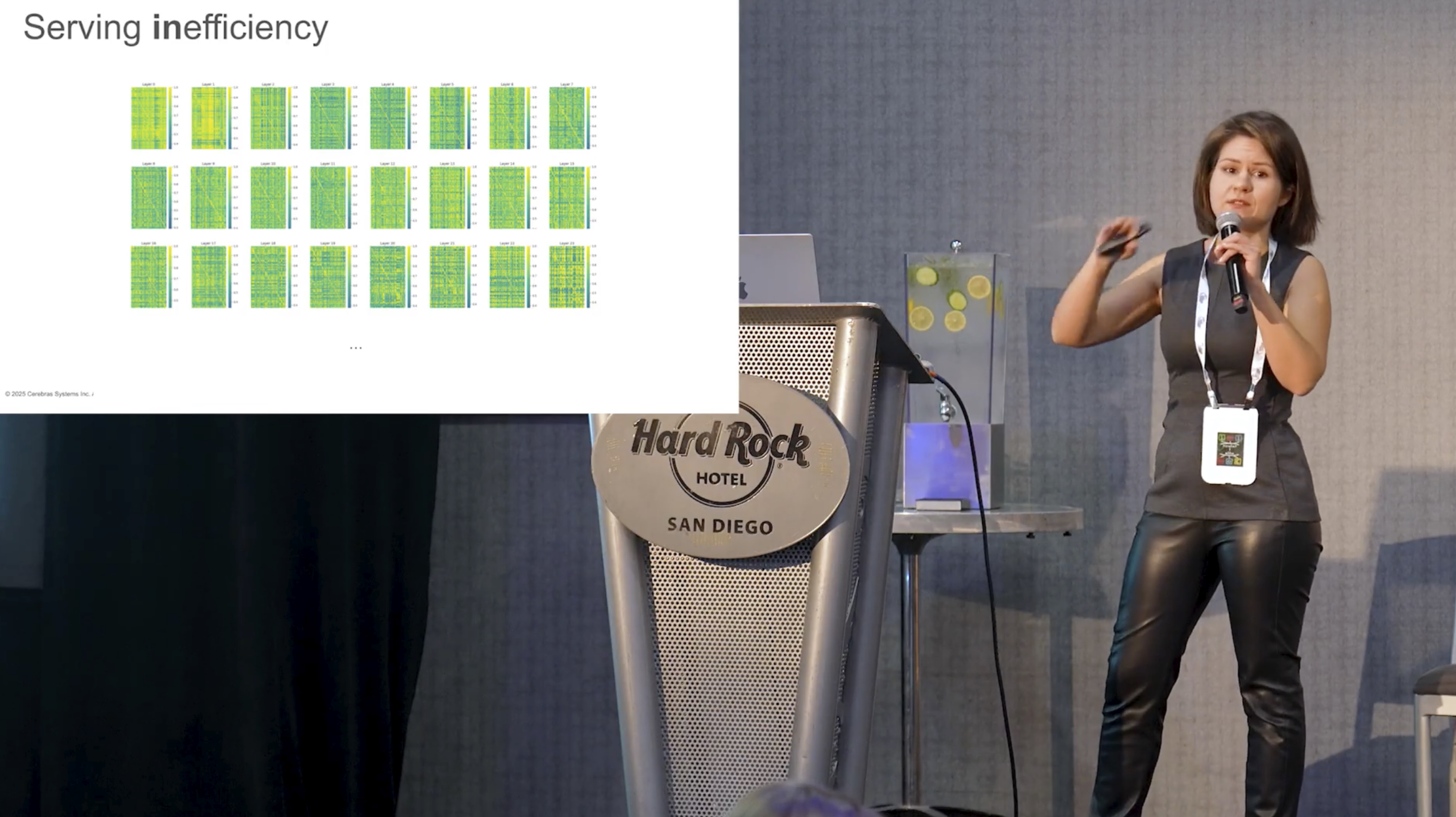

- Krishna Teja Chitty-Venkata, Sylvia Howland, Golara Azar, Daria Soboleva, Natalia Vassilieva, Siddhisanket Raskar, Murali Emani, Venkatram Vishwanath, MoE-Inference-Bench: Performance Evaluation of Mixture of Expert Large Language and Vision Models, SC, 2025 [paper]

- K2 Team (incl. Daria Soboleva), K2-V2: A 360-Open, Reasoning-Enhanced LLM, arXiv, 2025 [paper]

- Shane Bergsma, Nolan Dey, Gurpreet Gosal, Gavia Gray, Daria Soboleva, Joel Hestness, Power Lines: Scaling Laws for Weight Decay and Batch Size in LLM Pre-training, NeurIPS, 2025 [paper]

- Shane Bergsma, Nolan Dey, Gurpreet Gosal, Gavia Gray, Daria Soboleva, Joel Hestness, Straight to Zero: Why Linearly Decaying the Learning Rate to Zero Works Best for LLMs, ICLR, 2025 [paper]

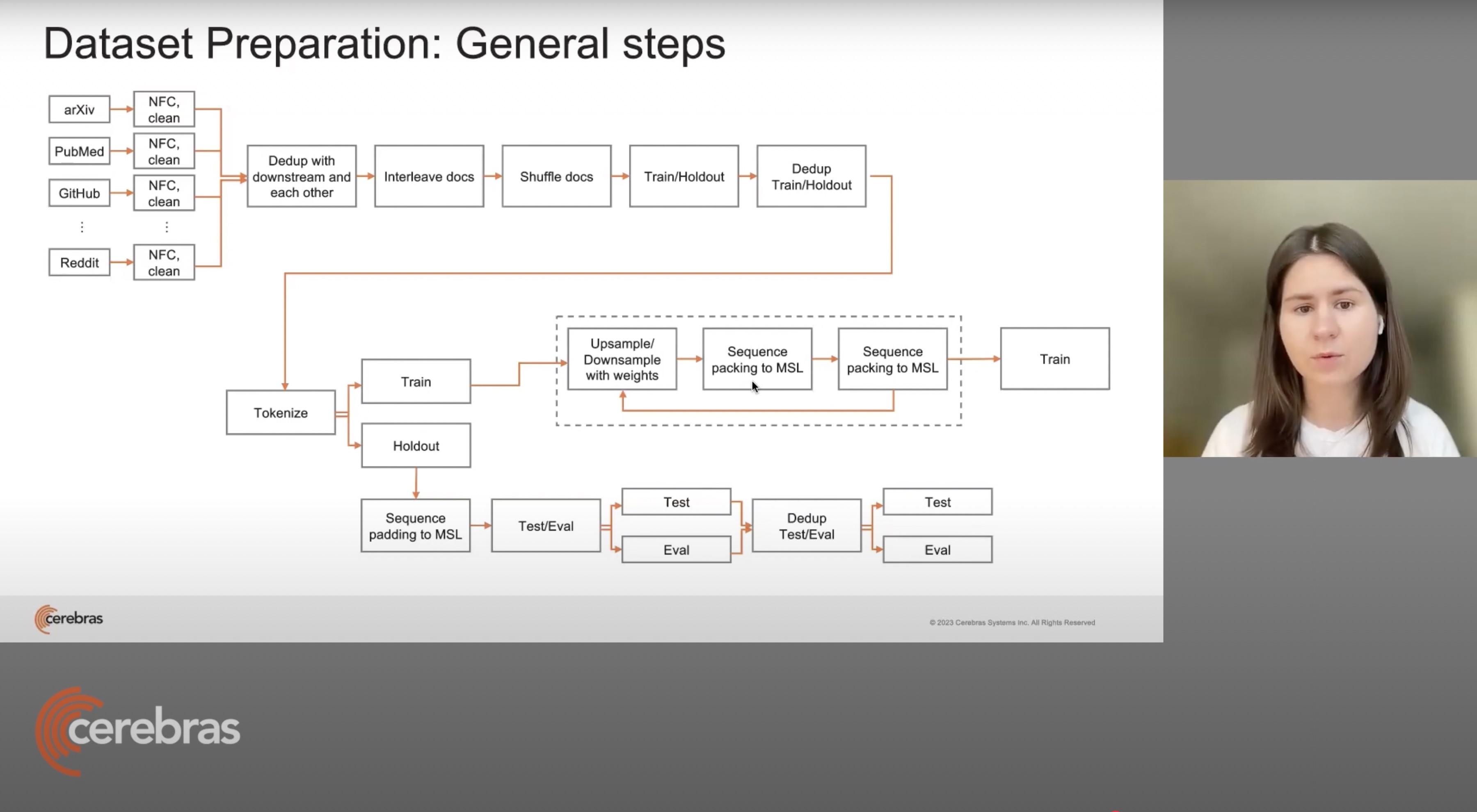

- Daria Soboleva, Faisal Al-Khateeb, Robert Myers, et al., SlimPajama: A 627B token cleaned and deduplicated version of RedPajama, 2023 [dataset] [blog post] [github]

- Nolan Dey*, Daria Soboleva*, Faisal Al-Khateeb, et al., BTLM-3B-8K: 7B Parameter Performance in a 3B Parameter Model, Efficient Natural Language and Speech Processing, NeurIPS, 2023 (* Equal contribution) [model] [paper] [Davis Blalock's roundup] [Jeremy Howard (fast.ai)]

- Faisal Al-Khateeb, Nolan Dey, Daria Soboleva, Joel Hestness, Position Interpolation Improves ALiBi Extrapolation, arXiv, 2023 [paper]

- Zhiqiang Shen, Tianhua Tao, Liqun Ma, Willie Neiswanger, Joel Hestness, Natalia Vassilieva, Daria Soboleva, Eric Xing, SlimPajama-DC: Understanding Data Combinations for LLM Training, arXiv, 2023 [paper]

- Daria Soboleva, Ondrej Skopek, Márius Šajgalík, et al., Replacing Human Audio with Synthetic Audio for On-device Unspoken Punctuation Prediction, ICASSP, 2021 [paper]

Patents

- Aleksandr Boymel, Daria Soboleva, Multi-phase training of machine learning models for search ranking, Filed, 2022 (Yandex) [patent]

Also on Google Scholar